Distributed Tracing with OpenTelemetry in Python

Updated March 2026: OpenTracing is now archived. Use opentelemetry-sdk and the opentelemetry-instrumentation-* auto-instrumentation packages for new projects. Jaeger natively receives OTLP (the OpenTelemetry protocol) as of Jaeger 1.35+. The concepts described here — spans, context propagation, trace backends — are identical in the OTel world.

In late 2019, the IBM services I was working on were generating logs. Good logs, correlated by request ID, structured JSON, shipped to a central aggregator. That was enough to debug most issues. What it couldn't tell us was why a request that touched five services was slow. Which service was the bottleneck? Was the latency in the first hop or the fourth? Logs give you a per-service view. Tracing gives you the full call graph.

Why Distributed Tracing

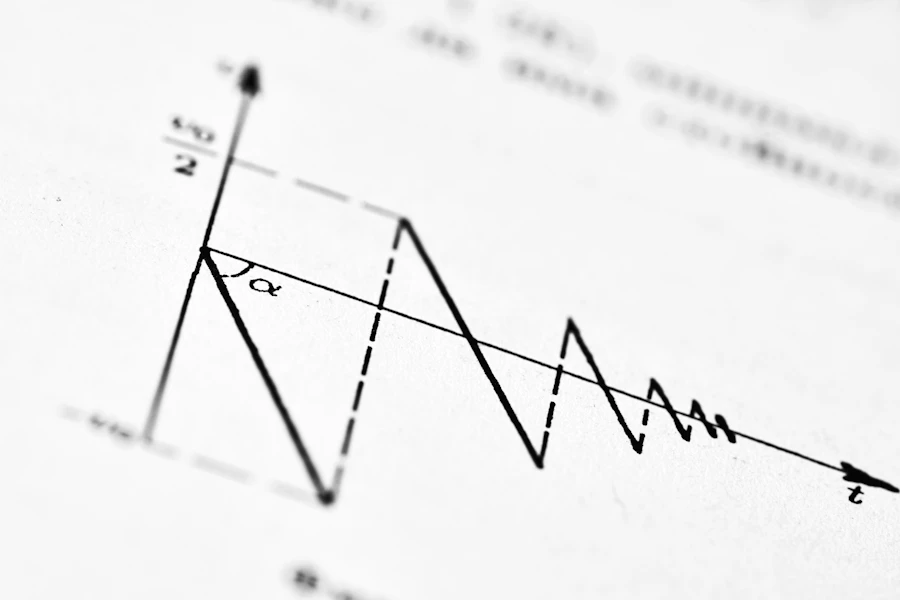

In a microservices architecture, a single user-facing request often fans out across multiple services. Service A calls B and C in parallel. B calls D. D calls a database. Without tracing, you have correlated log lines spread across four log streams, and you're manually reconstructing the timeline. With tracing, you have a single trace: a hierarchical tree of spans, each with a start time, duration, service name, and any metadata you attach. You can see in one view that the request took 800ms total, 750ms of which was in service D, 600ms of which was a slow database query.
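To make the tree structure concrete, here is a minimal sketch in plain Python (no tracing library) that models the request above as nested spans and computes each span's self time, meaning the part of its duration not covered by any child. The `Span` class, the operation names, and every timing other than the 800ms, 750ms, and 600ms figures are illustrative assumptions, not output from a real tracer.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Iterator


@dataclass
class Span:
    service: str
    operation: str
    start_ms: int
    duration_ms: int
    children: list[Span] = field(default_factory=list)

    @property
    def end_ms(self) -> int:
        return self.start_ms + self.duration_ms

    def self_time_ms(self) -> int:
        """Duration not covered by any child span (children may overlap)."""
        covered = 0
        cursor = self.start_ms
        for child in sorted(self.children, key=lambda c: c.start_ms):
            lo = max(child.start_ms, cursor)
            hi = min(child.end_ms, self.end_ms)
            if hi > lo:
                covered += hi - lo
                cursor = hi
        return self.duration_ms - covered

    def walk(self, depth: int = 0) -> Iterator[tuple[int, Span]]:
        """Yield (depth, span) pairs in depth-first order."""
        yield depth, self
        for child in self.children:
            yield from child.walk(depth + 1)


# A calls B and C in parallel, B calls D, D queries the database.
db = Span("db", "query", start_ms=40, duration_ms=600)
d = Span("D", "lookup", start_ms=20, duration_ms=750, children=[db])
b = Span("B", "call_d", start_ms=10, duration_ms=770, children=[d])
c = Span("C", "enrich", start_ms=10, duration_ms=120)
root = Span("A", "handle_request", start_ms=0, duration_ms=800, children=[b, c])

for depth, span in root.walk():
    indent = "  " * depth
    print(f"{indent}{span.service}:{span.operation} "
          f"{span.duration_ms}ms (self {span.self_time_ms()}ms)")

bottleneck = max((s for _, s in root.walk()), key=Span.self_time_ms)
print("largest self time:", bottleneck.service, bottleneck.operation)
```

Sorting by self time rather than total duration is what points at the database query: every ancestor of a slow span looks slow too, but only the span doing the waiting has a large self time.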

OpenTracing in Python: The 2019 Approach

In late 2019, OpenTracing was the standard. OpenTelemetry had just been announced as the merger of OpenTracing and OpenCensus, but the SDKs were in early alpha and not production-ready yet. We used OpenTracing with the Jaeger client.

```shell
pip install opentracing jaeger-client flask-opentracing
```

Initializing the Jaeger tracer:

```python
import opentracing
from jaeger_client import Config


def init_tracer(service_name: str) -> opentracing.Tracer:
    config = Config(
        config={
            'sampler': {
                'type': 'const',
                'param': 1,  # Sample 100% of traces. In prod, use probabilistic.
            },
            'local_agent': {
                'reporting_host': 'jaeger-agent.monitoring.svc.cluster.local',
                'reporting_port': 6831,  # UDP compact thrift
            },
            'logging': True,
        },
        service_name=service_name,
        validate=True,
    )
    return config.initialize_tracer()


tracer = init_tracer('key-manager-api')
```

Creating Spans Manually

The core abstraction is a span. A span represents a unit of work — an RPC call, a database query, a processing step. Spans nest to form a trace.

```python
import opentracing

# hsm_client, HSM_ENDPOINT and HSMError are defined elsewhere in the service.


def wrap_key(key_id: str, plaintext: bytes) -> bytes:
    with tracer.start_active_span('wrap_key') as scope:
        span = scope.span
        span.set_tag('key.id', key_id)
        span.set_tag('plaintext.size', len(plaintext))
        try:
            # Call the HSM
            with tracer.start_active_span('hsm.wrap', child_of=span) as hsm_scope:
                hsm_scope.span.set_tag('hsm.endpoint', HSM_ENDPOINT)
                result = hsm_client.wrap(key_id, plaintext)
                hsm_scope.span.set_tag('key.version', result.version)
            span.set_tag('success', True)
            return result.ciphertext
        except HSMError as e:
            span.set_tag('error', True)
            span.log_kv({'event': 'error', 'message': str(e)})
            raise
```

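start_active_span stores the current span in a thread-local scope by default, so child spans created within that context automatically become children of the current span without explicitly passing the parent reference (the child_of=span in the example above is redundant but harmless). For async code (asyncio), a thread-local is not enough, because many coroutines share one thread and context propagation is not automatic across them. There you configure the tracer with an asyncio-aware scope manager, such as opentracing.scope_managers.asyncio.AsyncioScopeManager, instead of the default.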

Context Propagation: Inject and Extract

The span becomes a distributed trace when it crosses a service boundary. The trace context (trace ID, span ID, sampling decision) has to be serialized into the outgoing HTTP request and deserialized on the receiving end.

On the sending side, inject puts the trace context into a dict that becomes HTTP headers:

```python
import requests
import opentracing
from opentracing.propagation import Format


def call_downstream_service(url: str, payload: dict) -> dict:
    headers = {}
    # Inject current span context into the headers dict.
    span = tracer.active_span
    if span:
        tracer.inject(
            span_context=span.context,
            format=Format.HTTP_HEADERS,
            carrier=headers,
        )
    response = requests.post(url, json=payload, headers=headers)
    response.raise_for_status()
    return response.json()
```

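On the receiving side, extract reads the context from the incoming headers and returns a span context. It does not create a span itself; you pass the result as child_of when starting the server-side span: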

```python
def extract_span_context(request_headers: dict):
    try:
        return tracer.extract(
            format=opentracing.Format.HTTP_HEADERS,
            carrier=request_headers,
        )
    except (opentracing.InvalidCarrierException, opentracing.SpanContextCorruptedException):
        return None  # Unusable trace context in this request, start a new trace.
```

Without inject/extract, each service creates an isolated trace and you lose the cross-service call graph entirely.
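To show what actually travels between services, here is a stdlib-only sketch of the round trip. It assumes the Jaeger client's default `uber-trace-id` header, whose value has the form `{trace-id}:{span-id}:{parent-span-id}:{flags}` in hex. `ToyContext` and these local `inject` and `extract` functions are hypothetical stand-ins for what the tracer does internally, not the library's code.

```python
from dataclasses import dataclass
from typing import Mapping, MutableMapping, Optional

HEADER = "uber-trace-id"
SAMPLED_FLAG = 0x01  # lowest bit of the flags field marks a sampled trace


@dataclass(frozen=True)
class ToyContext:
    trace_id: int
    span_id: int
    parent_id: int
    flags: int

    @property
    def sampled(self) -> bool:
        return bool(self.flags & SAMPLED_FLAG)


def inject(ctx: ToyContext, carrier: MutableMapping[str, str]) -> None:
    """Serialize the context into an outgoing headers mapping."""
    carrier[HEADER] = (
        f"{ctx.trace_id:x}:{ctx.span_id:x}:{ctx.parent_id:x}:{ctx.flags:x}"
    )


def extract(carrier: Mapping[str, str]) -> Optional[ToyContext]:
    """Parse the context from incoming headers; None if the header is absent."""
    # HTTP header names are case-insensitive.
    value = next((v for k, v in carrier.items() if k.lower() == HEADER), None)
    if value is None:
        return None
    parts = value.split(":")
    if len(parts) != 4:
        raise ValueError(f"malformed {HEADER} header: {value!r}")
    trace_id, span_id, parent_id, flags = (int(p, 16) for p in parts)
    return ToyContext(trace_id, span_id, parent_id, flags)


# Sending side: the current span's context goes into the request headers.
outgoing = ToyContext(trace_id=0xABC123, span_id=0x1, parent_id=0x0, flags=SAMPLED_FLAG)
headers: dict = {}
inject(outgoing, headers)

# Receiving side: same trace ID, new span ID, parent set to the caller's span.
incoming = extract(headers)
server_span = ToyContext(
    trace_id=incoming.trace_id,
    span_id=0x2,
    parent_id=incoming.span_id,
    flags=incoming.flags,
)
print(headers)
print(server_span)
```

The point of the sketch is that the trace ID and the sampling flag survive the hop unchanged while the span ID changes, which is what lets the backend stitch spans from different services into one tree.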

Flask Auto-Instrumentation

Manually wrapping every route handler is tedious. flask_opentracing handles it automatically:

```python
from flask import Flask
from flask_opentracing import FlaskTracing

app = Flask(__name__)
tracing = FlaskTracing(tracer, trace_all_requests=True, app=app)


@app.route('/v1/keys/<key_id>/wrap', methods=['POST'])
def wrap_key_endpoint(key_id: str):
    # This request already has a span started by FlaskTracing.
    # Called with no argument, tracing.get_span() returns the span
    # for the current request.
    span = tracing.get_span()
    span.set_tag('key.id', key_id)
    # ...
```

trace_all_requests=True starts a span for every incoming request, extracts any incoming trace context from the headers automatically, and finishes the span when the response is sent. You get the request URL, method, and status code tagged automatically.

Running Jaeger Locally

For development, Jaeger all-in-one is the fastest way to get a trace backend running:

```shell
docker run -d --name jaeger \
  -p 6831:6831/udp \
  -p 16686:16686 \
  jaegertracing/all-in-one:1.14
```

Port 6831 is the UDP port the Jaeger client reports spans to. Port 16686 is the Jaeger UI. After running some instrumented code, open http://localhost:16686 and you'll see your traces.

Sampling in Production

const sampling at rate 1 (100% of requests traced) is fine for development but not for production under load. The overhead is low but not zero, and more importantly the volume of trace data at 100% sampling is enormous.

For production we used probabilistic sampling at 1%:

```python
'sampler': {
    'type': 'probabilistic',
    'param': 0.01,  # 1% of requests
},
```
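A probabilistic sampler can make its decision from the trace ID alone, which keeps the decision cheap and reproducible for a given trace. The sketch below is a simplified illustration of that idea, assuming uniformly random 64-bit trace IDs. It is not the Jaeger client's code, and `should_sample` is a hypothetical name.

```python
import random

MAX_TRACE_ID = 1 << 64  # assumes 64-bit trace IDs


def should_sample(trace_id: int, rate: float) -> bool:
    """Sample a trace if its ID falls below the rate's share of the ID space."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    return trace_id < int(rate * MAX_TRACE_ID)


# Simulate 100,000 requests with random trace IDs (seeded for repeatability).
rng = random.Random(42)
trace_ids = [rng.getrandbits(64) for _ in range(100_000)]
sampled = sum(should_sample(t, 0.01) for t in trace_ids)
print(f"sampled {sampled} of {len(trace_ids)} traces")
```

At a 1% rate this keeps roughly one trace in a hundred, so rare failures on low-traffic endpoints can go unsampled for a long time. That trade-off is the usual reason to look beyond a single fixed probability.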

For IBM's key management service, we also used adaptive sampling: the Jaeger backend can be configured to adjust the sampling rate to hit a target number of traces per second, and clients pick up the current rate through the agent. That means you maintain visibility even during traffic spikes without overwhelming the collector.
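The arithmetic behind a traces-per-second target is simple enough to sketch. The function below is a deliberately simplified illustration, not Jaeger's algorithm (a production implementation would typically work per operation and smooth its adjustments over time). The name `adjusted_rate` and the `min_rate` floor are assumptions made for this example.

```python
import math


def adjusted_rate(
    target_traces_per_sec: float,
    observed_requests_per_sec: float,
    min_rate: float = 1e-5,
) -> float:
    """Sampling probability that yields roughly the target trace throughput."""
    if observed_requests_per_sec <= 0:
        return 1.0  # no traffic observed: sample whatever arrives
    rate = target_traces_per_sec / observed_requests_per_sec
    # Clamp to a valid probability, with a floor so sampling never stops.
    return max(min_rate, min(1.0, rate))


for rps in (5, 100, 10_000):
    rate = adjusted_rate(target_traces_per_sec=10, observed_requests_per_sec=rps)
    print(f"{rps:>6} req/s -> rate {rate:.4f} -> ~{rate * rps:.0f} traces/s")
```

As traffic grows a hundredfold the probability shrinks by the same factor, so the collector sees about the same number of traces per second either way.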

The Transition to OpenTelemetry

OpenTelemetry was announced in May 2019 as the merger of OpenTracing and OpenCensus. The promise was a single, vendor-neutral observability standard with first-class support for traces, metrics, and logs. By mid-2020 we were migrating the Python services to the OTel SDK.

The core concepts are identical. Spans, context propagation, inject/extract — all the same. The API surface changed. Instead of opentracing, you import from opentelemetry. Auto-instrumentation moved to the opentelemetry-instrumentation-* package family, which covers Flask, requests, SQLAlchemy, and most of the common libraries.

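If you're starting a new project today, skip OpenTracing entirely and go directly to OpenTelemetry. The SDK is stable, the instrumentation packages are comprehensive, and Jaeger and most observability vendors can receive OTLP directly. For backends that expect their own format, such as Zipkin, the OpenTelemetry Collector or a backend-specific exporter can bridge the gap.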

```shell
# 2026 equivalent
pip install opentelemetry-sdk opentelemetry-instrumentation-flask opentelemetry-exporter-otlp
```

The investment in understanding spans and context propagation from 2019 carried over completely. The implementation details changed; the mental model didn't.