I Built a Blog Platform in 12 Hours With Claude. Here's What That Actually Means.

I had 195 old blog posts sitting in a WordPress export from 2014–2019 and no desire to run WordPress again. I also had opinions: I wanted async Python, asyncpg, a real database schema, nginx I could actually read, and zero PHP. I did not want to install a theme, disable twelve plugins, or configure a cache plugin to do what a Cache-Control header already does.

So I built it from scratch. The entire thing — FastAPI backend, PostgreSQL, nginx on EC2, CI/CD pipeline, subscriber notifications, Prometheus metrics, WebP images, pretty URLs, deploy rollback — in about 12 hours of actual work spread across five days. 128 commits. 96 pull requests.

The CI/CD pipeline was running five minutes after the initial commit. Everything that came after was shaped by that decision.

Claude wrote most of the code.

What I want to talk about is what that actually means, and more specifically, what it doesn't mean.

The Breakdown

| Day | Hours | What shipped |

|---|---|---|

| Mar 20 | ~3.3 hrs | Repo structure, CI/CD pipeline, linting, PostgreSQL + asyncpg, security hardening, unit tests, nginx |

| Mar 21 | ~1.2 hrs | Images for 25+ old posts, Unsplash query tuning |

| Mar 22 | ~0.4 hrs | Post revision history, image optimization (4.3MB → 636KB) |

| Mar 23 | — | Nothing |

| Mar 24 | ~1.1 hrs | WebP + nginx content negotiation, Core Web Vitals (LCP, CLS, fonts), PageSpeed iteration |

| Mar 25 | ~6.4 hrs | Blog on main page, subscribe modal, RSS, digest emails, analytics, pretty URLs, Python 3.12, security hardening (15 PRs), Prometheus metrics, deploy fixes |

March 25 is where most of it lives. This whole project was built after hours — picked up after work, put down when it was time to sleep. That session started where it always does, after the day job wrapped, and ran late into the night. It's where the system went from "working" to "something I'd actually maintain."

By the Numbers

| Metric | Value |

|---|---|

| Total commits | 128 |

| Merged PRs | 96 |

| Actual working hours | ~12 |

| Lines of code (net) | 14,332 across 173 files |

| Pace | ~1,200 lines/hour |

| Time from first commit to working CI/CD | 5 minutes |

| API endpoints shipped | 18 |

| Unit tests written | 126 |

| Blog posts imported | 195 |

| Posts with images | 190 / 195 |

| Image compression (one post) | 4.3 MB → 636 KB (85% reduction) |

| Managed services used | 0 |

| WordPress plugins needed | 0 |

The 126 tests are the number I'd defend most. That's not a coverage vanity metric — every feature came with a test that actually exercised the failure case, not just the happy path. The test suite caught three issues before they reached production. On a solo project under time pressure, that discipline doesn't happen without something keeping you honest.

The 5 minutes to CI/CD is the one I'd hold up as the structural decision. Everything else in this table is downstream of it. Tests exist because CI enforced them. PRs are small because CI gates them. The deploy script bugs were caught because CI ran on every push and the pipeline had to stay green. You can't retroactively install that discipline — it has to be the second commit, not the fiftieth.

The Archive Problem First

Before I could have a blog, I needed the posts. This turned out to be its own project.

The source material was split across two places: a DynamoDB backup from an old personal site, and archive.org snapshots for everything else. The WordPress export XML was mostly useless — raw HTML with broken encoding, Cloudflare email-obfuscation artifacts baked in, HTML entities that had been double-escaped. For the archive.org posts, every snapshot had to be parsed with a custom HTMLParser that hunted for entry-content, post-body, and the-content divs, then converted the contents to Markdown. 53 posts needed a manual slug-to-URL mapping because the DynamoDB key names didn't match the archive.org paths. Six posts only existed in the DynamoDB backup and had no archive.org record at all.

The whole thing — fetching, parsing, deduplicating, converting to Markdown, normalizing the author name — ended up as blog/fetch_posts.py and blog/process_posts.py. Claude wrote both scripts. That part was collaborative in a way the API work wasn't: I had the source data and knew what it looked like, Claude wrote the parsers, I ran them, and we iterated on the output until it was clean.

The end state is 195 posts as flat .txt files with simple frontmatter:

Title: Setting Up Tornado with nginx

Author: Marie H.

Date: 2015-07-12

Image: /images/posts/tornado-nginx.jpg

Tags: python, nginx

============================================================

Content in Markdown...

blog/import_posts.py upserts on slug, so running it twice is always safe. The recovery was the longest part of the project — not the API, not the deploy script, not the security hardening round. Getting 10 years of posts out of two imperfect sources and into a format I could actually work with took more time than any single feature.

What I Built and Why It's Not WordPress

The architecture is deliberately minimal — and some of it was already decided before I wrote a line of code.

I've had a 3-year reserved EC2 instance running personal projects for years. It already had nginx, PostgreSQL, and a handful of other things on it. That influenced every infrastructure choice here: one host, no orchestration, Docker container talking to a local database over localhost. There was no reason to pay for a managed database or a separate compute layer when I already had a box that wasn't going anywhere. The "boring stack" wasn't just an aesthetic preference — it was also the most economical use of something I'd already committed to.

| Layer | Technology | Why |

|---|---|---|

| API | FastAPI + asyncpg | Async from the start; PostgreSQL native types |

| DB | PostgreSQL 5433 | One instance, blog posts + subscribers |

| Frontend | Static HTML/JS | No build step, no bundler, no framework |

| Proxy | nginx | Real config, real headers, no plugin |

| Infra | EC2 + Docker | One container, one reserved host, no orchestration overhead |

| CI/CD | GitHub Actions → deploy.sh | Push to main, done |

The subscriber system was the piece I expected to take longest. It didn't. Email verification with SES, token expiry, bounce/complaint handling via SNS webhooks, unsubscribe links in every email — all of it came together faster than I've ever done it with a framework. The async foundation mattered: asyncpg with $1, $2 positional parameters, connection pooling baked in, no ORM translating things I'd rather just write in SQL.

What I gave up: nothing I was using. WordPress gave me a visual editor, a plugin ecosystem, and a database schema I had no control over. I write posts in a text editor. I don't need the visual editor. The plugin ecosystem was mostly solving problems I don't have.

128 Commits, 12 Hours

The table above shows what shipped when. Here's the part that isn't visible in a commit log:

Foundation: The CI pipeline was commit two — five minutes in, before anything else. That meant the entire subsequent build happened under test. Every feature, every fix, every refactor had to pass lint and tests before it could merge. The FastAPI application, asyncpg pool, SES email flow, nginx config, and Docker rollback all came out of that first evening. It was a lot to ship in one session, but it held together because nothing merged without a green pipeline.

Hardening (scattered across days two through four, ~3 hours):

- Python 3.12 upgrade

- Transient vs permanent DB error distinction (503 for infrastructure failures, 404 for missing content — not the same thing)

- Admin key returning 403 instead of 503 when unconfigured

- SNS certificate cache with 24-hour TTL for AWS key rotation

- PII masking in log output (ri***@gmail.com, not the full address)

- HTML escaping in email templates (post titles and URLs, not just text content)

- SubscribeSource as a proper enum instead of an unconstrained string

- Preferences token expiry returning an error page instead of silently rotating the token

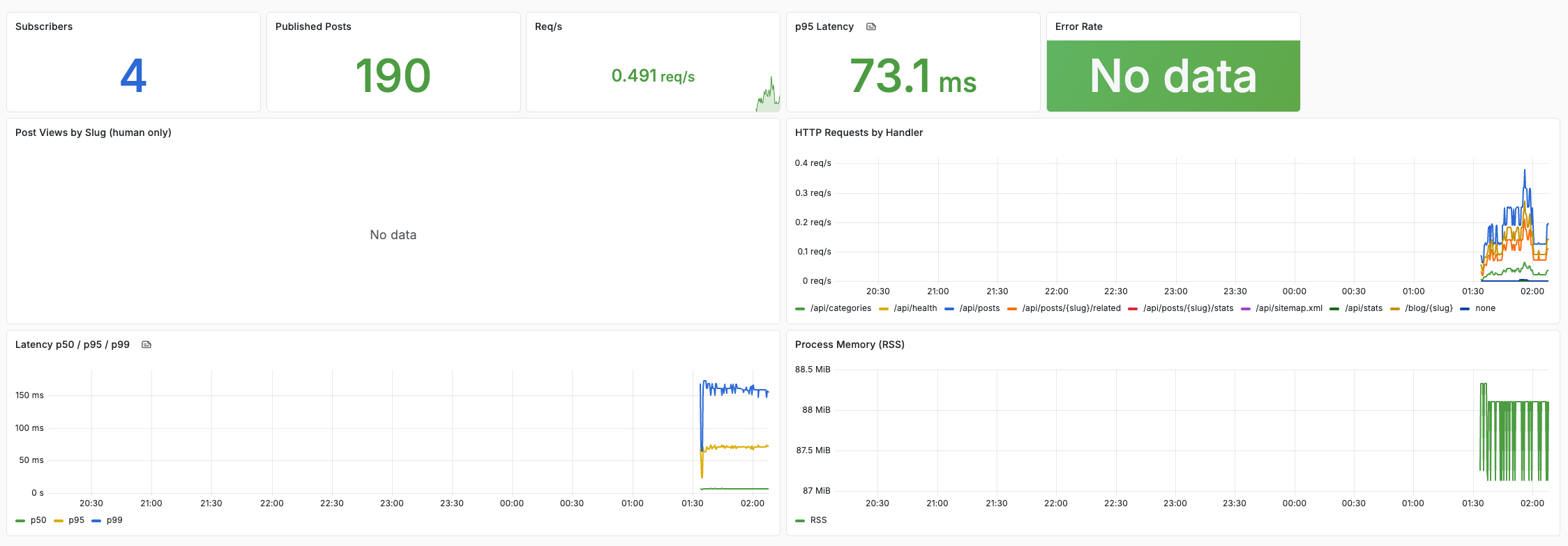

- Prometheus metrics at /metrics with subscriber count and post count as gauges — this plugs into a local Grafana instance I already run for other sites and IoT devices, so the blog's metrics land in the same dashboards as everything else

Infrastructure fixes that emerged (day five, buried in the ~6-hour session):

- deploy.sh was piping docker build through | tail -5 to limit output, which swallowed the exit code. A failed build looked like success; the old container kept running. Fixed by removing the pipe.

- deploy.yml auto-detection wasn't treating changes to deploy.sh itself as API changes. So a deploy script fix wouldn't redeploy the API. Fixed the grep pattern.

- pipenv install --system --deploy enforces that the lockfile was generated on the exact same Python patch version as the Docker image. My laptop runs a newer Python than the Docker image. Silent failure. Switched to pipenv sync --system.

None of these are glamorous. Most of them are the kind of thing that bites you three weeks into production when the deploy script silently succeeds while running the wrong image.

The Part About Claude

People reach for one of two framings when they talk about AI coding tools: either "AI writes the code, I review it" or "AI is a smarter autocomplete." Both are wrong for how I actually used it here as well as in my day to day job — where I'm actively trying to evangelize the same model to the rest of the team.

The pitch at work isn't "let AI write your code." It's: use it to increase infrastructure velocity. Smaller teams doing more, faster, with the same discipline. The end goal is further out — autonomic infrastructure, systems that self-heal and self-optimize with minimal human intervention — but you don't get there by going fully agentic before you've built the judgment layer. You get there by building that judgment layer first, in humans, through directed use.

"You have to know what good looks like before you can delegate the decision."

"AI didn't build this. I built it — 12 hours of directed work, with something that handled the weight so I could move faster. The decisions were mine. The architecture was mine. The judgment calls about what was acceptable and what wasn't were mine. Claude was the exoskeleton. I was still the one walking."

The better analogy is an exoskeleton. I'm still walking. I'm deciding where to go, what the destination is, when to stop and reconsider. The exoskeleton handles the load — the syntax, the boilerplate, the test fixtures, the edge cases I'd have to think through anyway. I move faster because I'm not carrying as much, not because something else is moving for me.

The distinction matters because fully agentic AI — give it a goal, let it run — produces different outcomes. It optimizes for completing the goal as specified. It doesn't know which shortcuts are acceptable and which ones you'll regret in three months. It doesn't know that you'd rather have a 503 than a silently swallowed error. It doesn't know that | tail -5 on a build command is a footgun.

What I did instead: I directed. Every feature, every fix, every architectural decision started with me knowing what I wanted and why. Claude handled the implementation — the actual code, the tests, the nginx location blocks, the SQL. I reviewed, caught the things that were wrong, pushed back on approaches that didn't fit the project's constraints. We went back and forth on the SNS verification logic, on when to mask vs when to log, on what "rate limit isolation in tests" should actually mean.

The obvious question is: what did Claude get wrong? The honest answer is that when things went sideways, the problem was the prompt, not the output. If I was vague about what I wanted, I got something vague back. If I described the problem precisely — the specific error, the exact behavior I wanted, the constraint I was working within — the output was almost always right.

This is just the Zen of Python applied to AI collaboration.

"Explicit is better than implicit.

Sparse is better than dense.

If the implementation is hard to explain, it's a sign the prompt was wrong."

The times I had to iterate were the times I hadn't been specific enough about what "done" looked like. The discipline of being precise about a problem — before handing it off — turns out to be the same discipline that makes you a better engineer regardless of the tools.

The 96 PRs aren't because Claude is slow. They're because CI was running from minute five, which meant every change had to be small enough to pass tests before it merged. That constraint is load-bearing. A giant diff with no CI would have hidden the deploy.sh pipe issue, the rate limit test collision, the vacuous Turnstile assertion. Small PRs against a green pipeline caught all three.

What Surprised Me

The testing discipline was stricter than what I'd have written alone. Every feature came with unit tests — happy path, failure path, edge cases. The test suite caught three issues that would have reached production: an HTML escaping bug in email templates, a rate limit collision between test classes sharing an IP, and a Turnstile test that passed vacuously because the secret was empty and verification was being skipped entirely.

That last one is worth naming: the test was asserting that an invalid token returns 400. It did return 400 — but only because the route was skipping verification entirely due to an unconfigured secret. The assertion was true for the wrong reason. We caught it because the test also used patch.object to verify the mock was actually being called. That's the kind of edge case that gets missed when you're writing tests after the fact.

The security hardening round was also more thorough than I'd have gotten to on my own in this timeframe. PII in logs is the kind of thing that sounds obvious in retrospect and takes three minutes to fix — but you have to notice it first. The SNS certificate cache TTL is a real issue (AWS rotates keys; a 24-hour TTL without expiry means you'd eventually be rejecting valid webhooks). The html.escape(quote=True) distinction for URL attributes versus html.escape() for text content is exactly the kind of subtle XSS surface that's easy to get wrong.

What's Next

This is a solid MVP. The subscriber system works, the content is live, the pipeline is green. What's missing is deliberate — search, paid tiers, sponsorships — and those are on a roadmap, not a backlog. There's a difference. A backlog is a list of things you might someday do. A roadmap has a goal and a sequence: organic traffic first, then subscribers, then revenue. The full roadmap is in the README.

The platform being custom-built means the roadmap items are actually buildable in the same way this was — a few hours, directed work, tested incrementally. That's the compounding effect of having a foundation you understand.

What It Doesn't Replace

Writing. Specifically, writing posts.

Claude can write in my voice if I ask it to. It can produce technically accurate content about things I've done. What it produces is not what I would write, because it doesn't have the failures I didn't mention, the thing that took four hours to figure out that I didn't document anywhere, the context that makes a detail worth including or not.

The posts that land well are the ones where the specificity is real — "the Akamai sensor data was the longest part," "37 variables needed the fix," "LGN014 was the error code and it meant exactly this." That specificity comes from actually having done the thing.

"Claude doesn't know what took four hours. It doesn't know which failure you left out, or why the detail you included is the one that matters. That knowledge lives in the person who did the work. You can't prompt your way to it."

I can direct Claude to help me write about work I've done. That's useful for structure, for pulling out which details matter, for turning rough notes into paragraphs. It's not a replacement for having done the work and knowing what was hard.

12 Hours

128 commits. 195 old posts imported and live. A subscriber system that handles bounces and complaints. Prometheus metrics. A deploy script with rollback. A test suite with 126 tests. Security headers I understand because I wrote them (or reviewed every line of what got written).

I would not have shipped this in 12 hours without Claude. I would not have shipped it in 12 hours with a fully agentic system either — I'd have gotten a working prototype that made choices I'd spend the next six months unwinding.

The right tool for what I wanted to build was me, with an exoskeleton. The exoskeleton handles the weight. I still have to know where I'm going — and the first place I pointed it was at a CI pipeline, because without that, nothing that followed would have been trustworthy.

The site is at cloudista.org. The code is open source at github.com/morissette/cloudista — nothing proprietary, nothing novel, just a boring stack chosen for the right reasons and built incrementally with every change tested before it landed.

195 posts recovered, imported, and live. Pipeline green. The foundation is solid. What comes next is just writing.